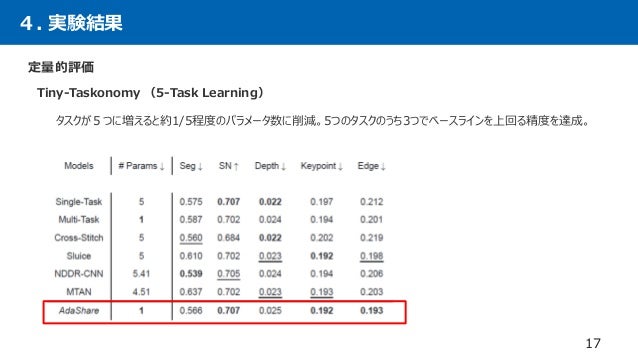

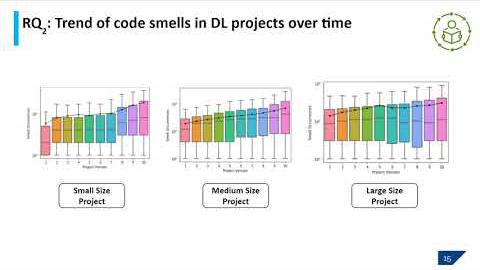

3-Task Learning and Tiny-Taskonomy 5-Task Learning (see Table 24). E. FLOPs and Inference Time: We report FLOPs of different multi-task learning baselines and their inference time for all tasks of a single image. Table 5 shows that AdaShare …

Dec 13, 19 · Deep Learning JP

Multi-task setup: Multi-task formulations of machine learning problems allow for joint learning of multiple objectives, exploiting differences and commonalities between objectives to improve performance over separate formulations. We designed our deep learning architecture to jointly train on both passive and active consumption objectives, with …

Auto Virtualnet Cost Adaptive Dynamic Architecture Search For Multi Task Learning Sciencedirect

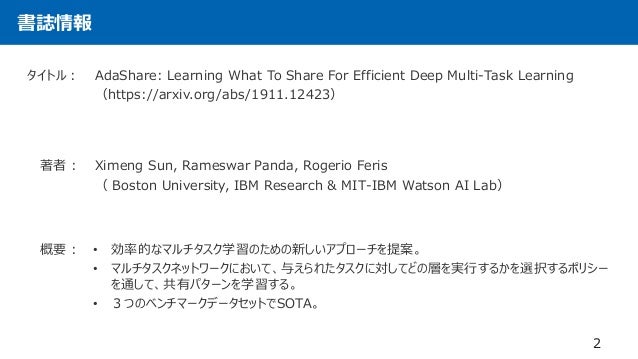

Adashare learning what to share for efficient deep multi-task learning

Topics: Machine Learning, Deep Learning

Rameswar Panda, Research Staff Member, MIT-IBM Watson AI Lab. Research areas: Computer Vision, Machine Learning, Artificial Intelligence.

Adashare Learning What To Share For Efficient Deep Multi Task Learning Deepai

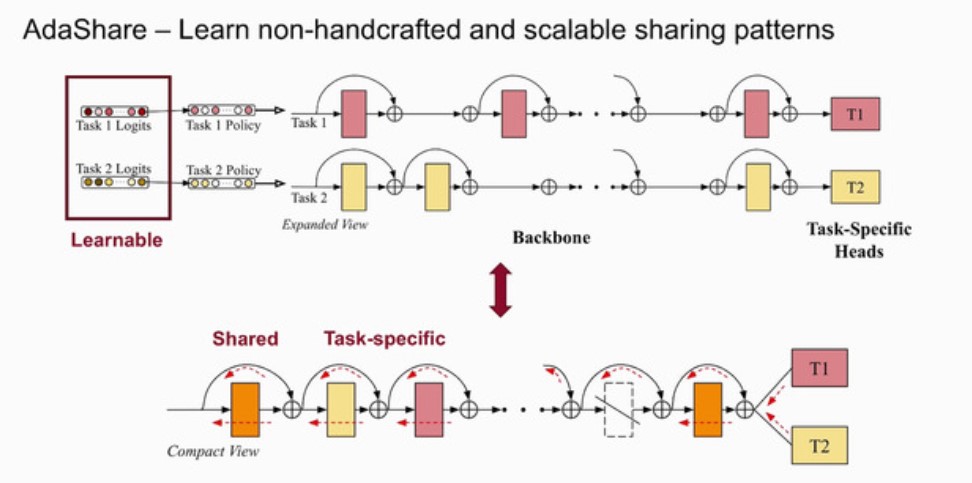

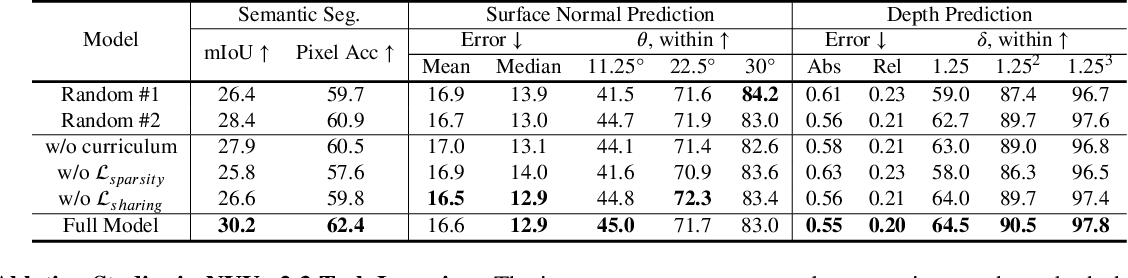

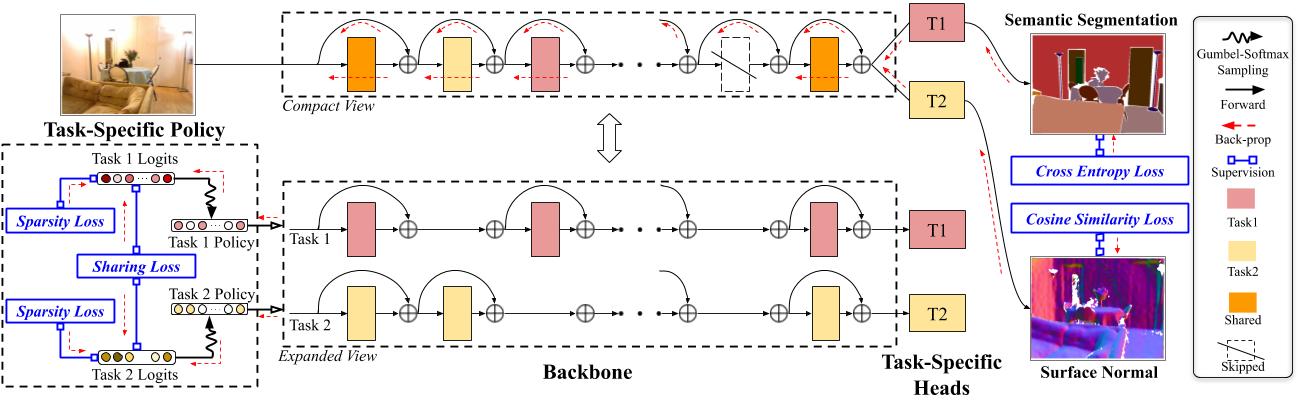

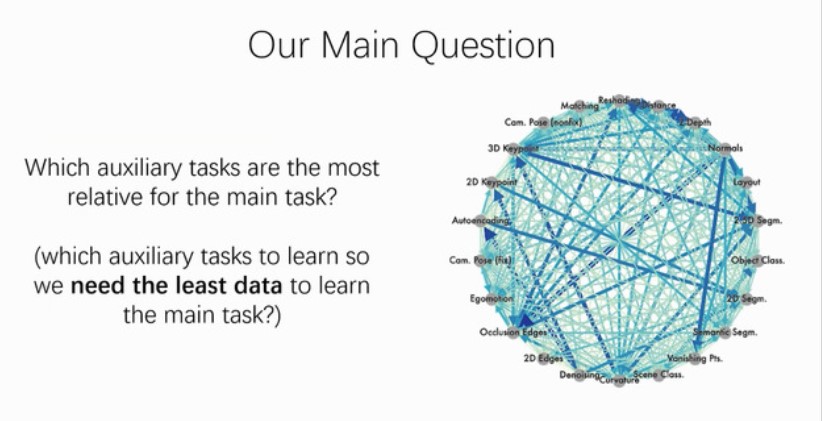

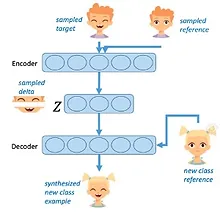

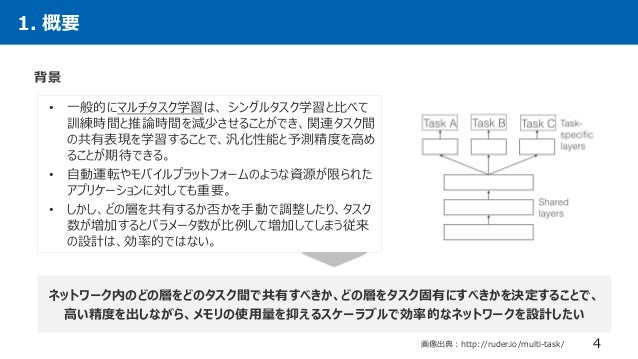

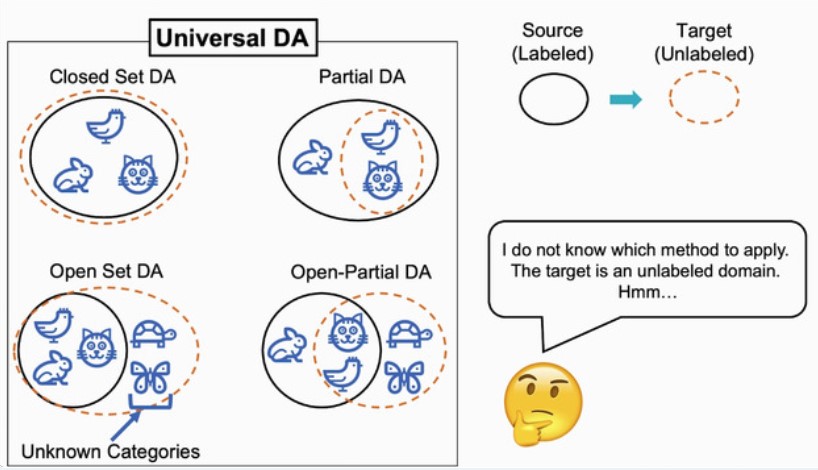

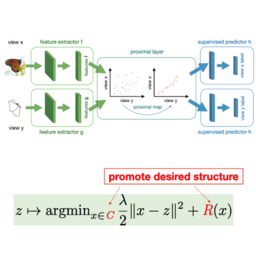

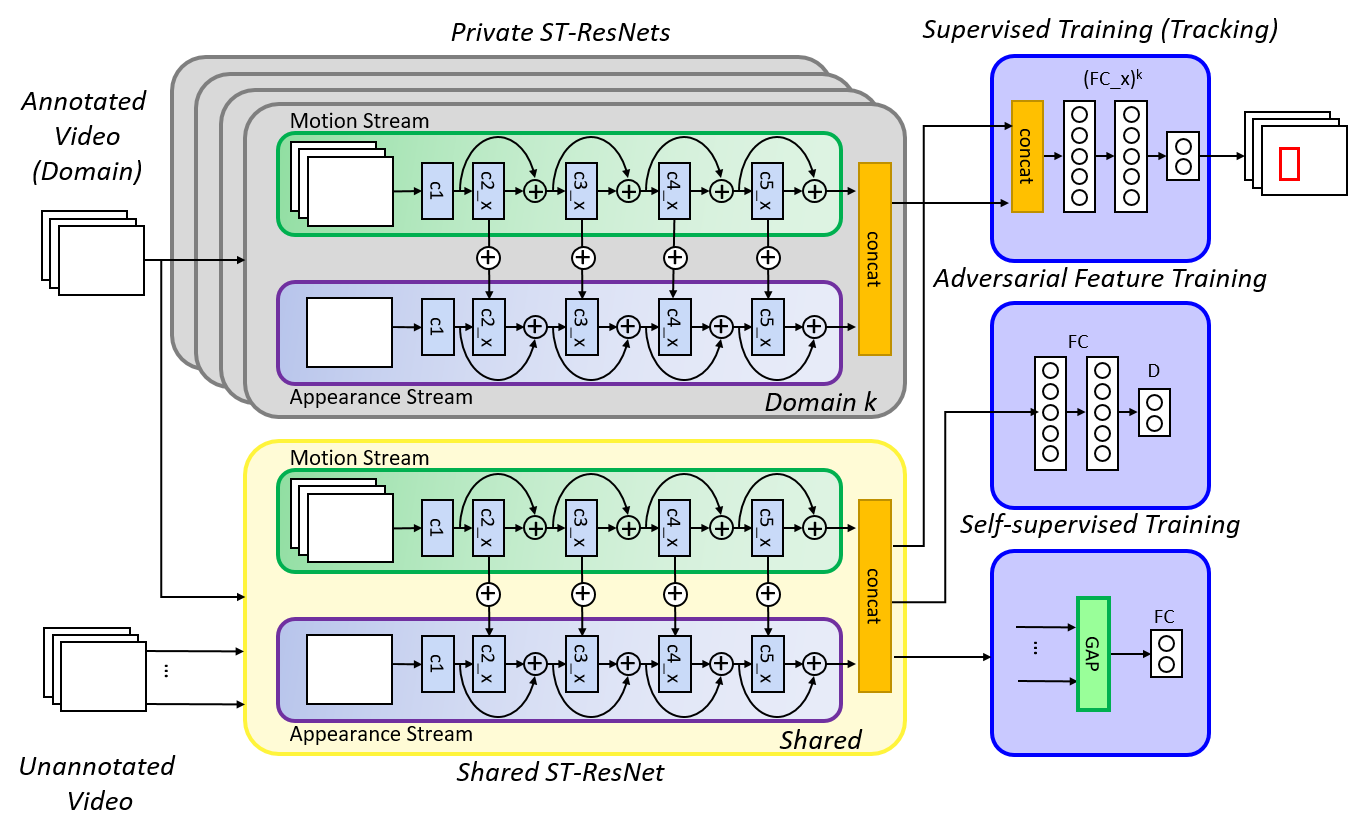

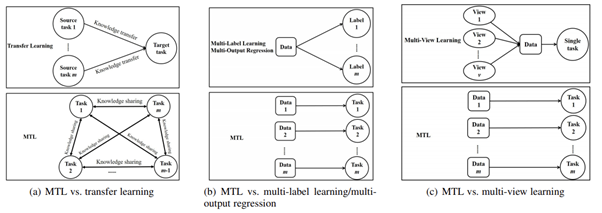

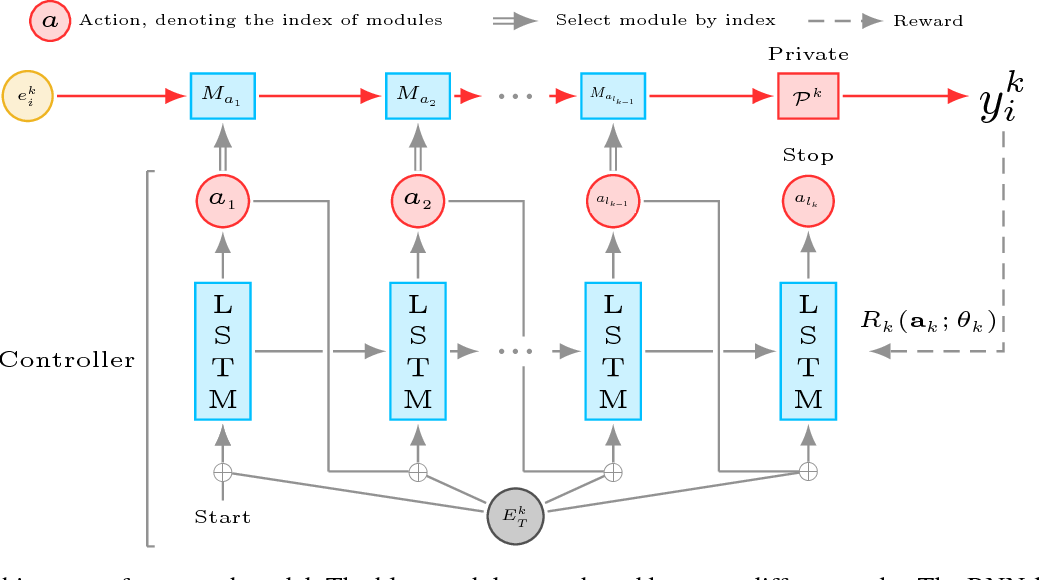

The typical way of conducting multi-task learning with deep neural networks is either through handcrafted schemes that share all initial layers and branch out at an ad hoc point, or through separate task-specific networks with an additional feature sharing/fusion mechanism. Unlike existing methods, we propose an adaptive sharing approach, called AdaShare, that decides what …

Apr 28, · With the advent of deep learning, many dense prediction tasks, i.e. tasks that produce pixel-level predictions, have seen significant performance improvements. The typical approach is to learn these tasks in isolation, that is, a separate neural network is trained for each individual task. Yet, recent multi-task learning (MTL) techniques have shown promising results …

Feb 29, · In multi-task learning, the sharing of information between related tasks affects and promotes the learning of each task. However, traditional multi-task learning techniques always require sufficient labeled data to improve the learning of each task, and labeling samples is always expensive in practice. In this paper, we propose two variants …

6 Dec: Managing Misinformation About Science

May 29, 17 · An Overview of Multi-Task Learning in Deep Neural Networks. Multi-task learning is becoming more and more popular. This post gives a general overview of the current state of multi-task learning. In particular, it provides context for current neural network-based methods by discussing the extensive multi-task learning literature. (Sebastian Ruder)

9 Dec: AIR Seminar "AdaShare: Learning What To Share For Efficient Deep Multi-Task Learning"

9 Dec: Poster Session "Computational Tools for Data Science"

AdaShare: Learning What To Share For Efficient Deep Multi-Task Learning. X. Sun, R. Panda, R. Feris, and K. Saenko. NeurIPS. See also: Fully-adaptive Feature Sharing in Multi-Task Networks (CVPR 17).

Moreover, we provide a joint deep multi-task learning framework to combine the task-specific module and the relational attention module. Finally, we apply our method to a multi-criteria business success assessment problem; both classical and state-of-the-art multi-task learning methods are employed to provide baseline performance.

We propose a novel multi-task learning architecture, which allows learning of task-specific feature-level attention. Our design, the Multi-Task Attention Network (MTAN), consists of a single shared network containing a global feature pool, together with a soft-attention module for each task. These modules allow for learning of task-specific features from the global features, whilst …
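The MTAN snippet above describes one shared feature pool with a soft-attention module per task. The following is only a minimal sketch of that idea, not the authors' implementation: it assumes the attention mask is a sigmoid of a per-element affine function of the shared features (in the actual network the mask would come from learned layers), and every name in it (`task_attention`, `task_params`) is hypothetical.

```python
import math
from typing import Dict, List, Tuple


def sigmoid(z: float) -> float:
    """Logistic function; squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def task_attention(shared: List[float],
                   weights: List[float],
                   biases: List[float]) -> List[float]:
    """Apply a soft mask in (0, 1) to each shared feature.

    Assumption: the mask is sigmoid(w * f + b) per element. This is a
    stand-in for the learned attention module of a real network.
    """
    return [sigmoid(w * f + b) * f for f, w, b in zip(shared, weights, biases)]


# One global feature pool, computed once and reused by every task.
shared_features = [2.0, 4.0]

# Hypothetical per-task attention parameters (weights, biases).
task_params: Dict[str, Tuple[List[float], List[float]]] = {
    "segmentation": ([0.0, 0.0], [0.0, 0.0]),  # mask is 0.5 everywhere
    "depth": ([1.0, -1.0], [0.0, 0.0]),        # favours the first feature
}

task_features = {
    name: task_attention(shared_features, w, b)
    for name, (w, b) in task_params.items()
}
```

The point of the design is that the expensive shared features are computed once, while each task only owns a small mask that re-weights them.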

Learning To Branch For Multi Task Learning Deepai

AdaShare: Learning What To Share For Efficient Deep Multi-Task Learning. Meta Review: A good paper that presents a new multi-task learning method. The reviewers agree that the paper is well written and the results support the main claim of the paper. The reviewers have some concerns regarding the applicability of the proposed method to other …

AdaShare: Learning What To Share For Efficient Deep Multi-Task Learning (Computer Vision, Multimodal Learning)

Approximate Cross-Validation for Structured Models

10 Dec: Writing Business Proposals – A Seminar for Faculty

DL Reading Group (DL輪読会): Adashare Learning What To Share For Efficient Deep Multi Task

Adashare Learning What To Share For Efficient Deep Multi Task Learning Arxiv Vanity

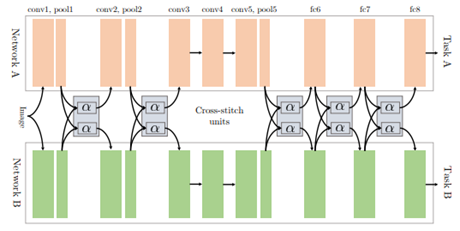

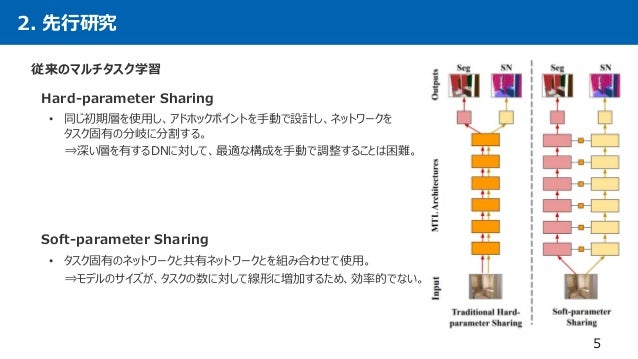

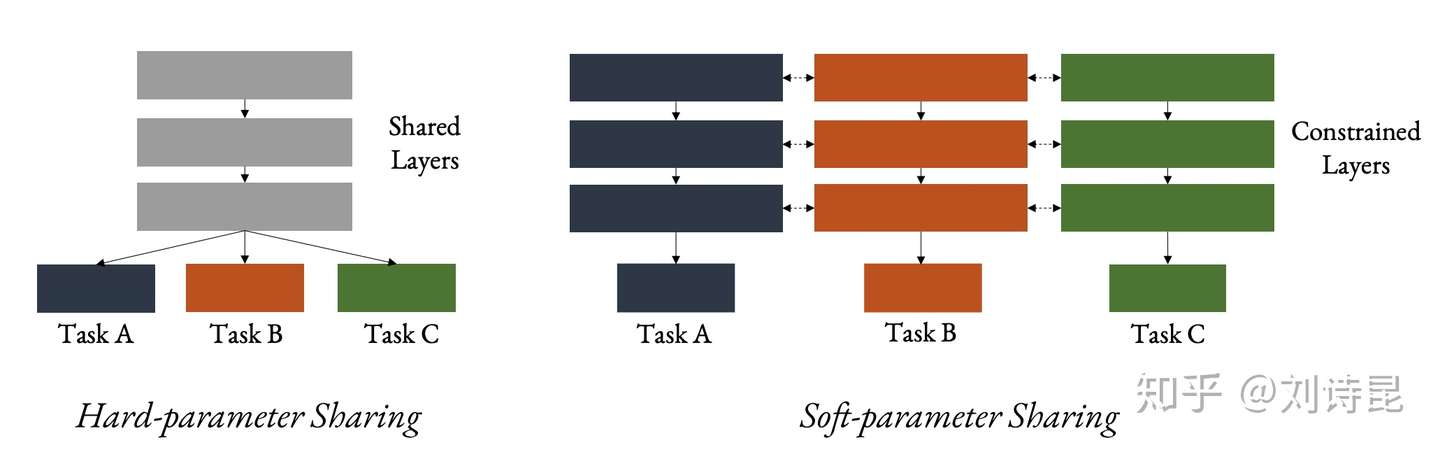

Meta Multi-Task Learning (Ruder et al., 19) uses a shared input layer and two task-specific output layers. Nonetheless, it is still unclear how to effectively decide what weights to share given a network and a set of tasks of interest. Instead of choosing between a soft-sharing or hard-sharing approach, a new effort in tackling the multi-task …

Dec 18, 19 · AdaShare Learning What To Share For Efficient Deep Multi-Task Learning, issue #1517 (open). Opened by icoxfog417 on Dec 18, 19 · 1 comment. Labels: CNN, ComputerVision

AdaShare: Learning What To Share For Efficient Deep Multi-Task Learning. Introduction

Hard-parameter sharing. Advantages: scalable. Disadvantages: pre-assumed tree structures, negative transfer, sensitive to task weights.

Soft-parameter sharing. Advantages: less negative interference (though it still exists), better performance. Disadvantages: not scalable.

Papertalk

Learned Weight Sharing For Deep Multi Task Learning By Natural Evolution Strategy And Stochastic Gradient Descent Deepai

Unlike existing methods, we propose an adaptive sharing approach, called AdaShare, that decides what to share across which tasks to achieve the best recognition accuracy, while taking resource efficiency into account.

Nov 14, 18 · Multi-task learning is a subfield of machine learning that aims to solve multiple different tasks at the same time by taking advantage of the similarities between them. This can improve learning efficiency and also act as a regularizer, which we will discuss in a while. Formally, if there are n tasks (conventional deep learning …
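The AdaShare snippet above says the method decides what to share across which tasks, but not how that decision is represented. One way to picture it, and this is an assumption rather than something stated on this page, is a binary select-or-skip policy: one row per task, one entry per block of a single shared network. The sketch below uses toy scalar "blocks" and a hand-written policy; in a real system the policy would be learned, and all names (`run_task`, `shared_blocks`) are hypothetical.

```python
from typing import Callable, List, Sequence

Block = Callable[[float], float]


def run_task(x: float, blocks: Sequence[Block], policy_row: Sequence[int]) -> float:
    """Run one task through the shared blocks, skipping those its policy disables.

    A skipped block acts as the identity, so the input passes through unchanged.
    """
    for block, keep in zip(blocks, policy_row):
        if keep:
            x = block(x)
    return x


def shared_blocks(policy: Sequence[Sequence[int]]) -> List[int]:
    """Return indices of blocks that at least two tasks execute."""
    n_blocks = len(policy[0])
    return [i for i in range(n_blocks) if sum(row[i] for row in policy) >= 2]


# Toy stand-ins for network blocks (assumption: scalar functions for brevity).
blocks: List[Block] = [lambda x: x + 1.0, lambda x: x * 2.0, lambda x: x - 3.0]

# Hypothetical policy: rows are tasks, columns are blocks (1 = execute, 0 = skip).
policy = [
    [1, 1, 0],  # task 0 uses blocks 0 and 1
    [1, 0, 1],  # task 1 uses blocks 0 and 2
]

outputs = [run_task(1.0, blocks, row) for row in policy]
common = shared_blocks(policy)
```

Here block 0 is shared by both tasks while blocks 1 and 2 are effectively task-specific, and each task runs only two of the three blocks, which is where the efficiency argument comes from.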

Jun 08, 21 · This parameter-efficient multi-task learning framework allows us to achieve the best of both worlds by sharing knowledge across tasks via hypernetworks while enabling the model to adapt to each individual task through task-specific adapters. Experiments on the well-known GLUE benchmark show improved performance in multi-task learning while …

19 hours ago · I have a multi-task network (using Keras) with three decoders for different tasks. The first decoder produces an instance segmentation map. The second and third maps are different n-dimensional maps;

Shayegan Omidshafiei, Jason Pazis, Christopher Amato, Jonathan P. How, and John Vian. 17. Deep decentralized multi-task multi-agent reinforcement learning under partial observability. In Proceedings of the 34th International Conference on Machine Learning (ICML), Volume 70, 2681–2690.

Papers Code Mit Ibm Watson Ai Lab

Pdf Stochastic Filter Groups For Multi Task Cnns Learning Specialist And Generalist Convolution Kernels

Nov 27, 19 · AdaShare: Learning What To Share For Efficient Deep Multi-Task Learning. 11/27/19, by Ximeng Sun, et al. Multi-task learning is an open and challenging problem in computer vision. The typical way of conducting multi-task learning with deep neural networks is either through handcrafting schemes that share all initial layers and branch out at an ad hoc point or through using separate task-specific …

AdaShare: Learning What To Share For Efficient Deep Multi-Task Learning. AdaShare is a novel and differentiable approach for efficient multi-task learning that learns the feature sharing pattern to achieve the best recognition accuracy, while restricting the …

Apr 07, · Learning to predict multiple attributes of a pedestrian is a multi-task learning problem. To share feature representations between two individual task networks, conventional methods like Cross-Stitch and Sluice networks learn a linear combination of …
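The last snippet is cut off, but cross-stitch units are usually described as mixing the activations of two task networks with a small learned matrix. The sketch below illustrates that linear combination for two feature vectors. The mixing matrix `alpha` is fixed by hand here purely for illustration (in training it would be learned), and the function name is hypothetical.

```python
from typing import List, Sequence, Tuple


def cross_stitch(feat_a: Sequence[float],
                 feat_b: Sequence[float],
                 alpha: Sequence[Sequence[float]]) -> Tuple[List[float], List[float]]:
    """Mix two task networks' features with a 2x2 matrix.

    new_a = alpha[0][0] * a + alpha[0][1] * b
    new_b = alpha[1][0] * a + alpha[1][1] * b
    applied element-wise to features at the same position.
    """
    mixed_a = [alpha[0][0] * a + alpha[0][1] * b for a, b in zip(feat_a, feat_b)]
    mixed_b = [alpha[1][0] * a + alpha[1][1] * b for a, b in zip(feat_a, feat_b)]
    return mixed_a, mixed_b


feat_a = [1.0, 2.0]  # activations from task A's network
feat_b = [3.0, 4.0]  # activations from task B's network

# Identity mixing: the two networks stay fully separate.
separate_a, separate_b = cross_stitch(feat_a, feat_b, [[1.0, 0.0], [0.0, 1.0]])

# Mostly task-specific, with a little information borrowed from the other task.
mixed_a, mixed_b = cross_stitch(feat_a, feat_b, [[0.9, 0.1], [0.1, 0.9]])
```

With the identity matrix nothing is shared, and as the off-diagonal entries grow the two networks exchange more information. This is the soft-sharing end of the spectrum: every task keeps its own full network.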

Taskonomy Dataset Papers With Code

Multi-task learning is an open and challenging problem in computer vision. The typical way of conducting multi-task learning with deep neural networks is either through handcrafted schemes that share all initial layers and branch out at an ad hoc point, or through separate task-specific networks with an additional feature sharing/fusion mechanism. Unlike existing methods, we propose an adaptive sharing approach, called AdaShare, that decides what to share …

AdaShare: Learning What To Share For Efficient Deep Multi-Task Learning. Ximeng Sun, Rameswar Panda, Rogerio Feris, Kate Saenko. Neural Information Processing Systems (NeurIPS).

(m,p,q,d) maps, where m is the number of such maps, (p,q) is the height and length of each map, and d is its dimension (1 to 10, for instance)

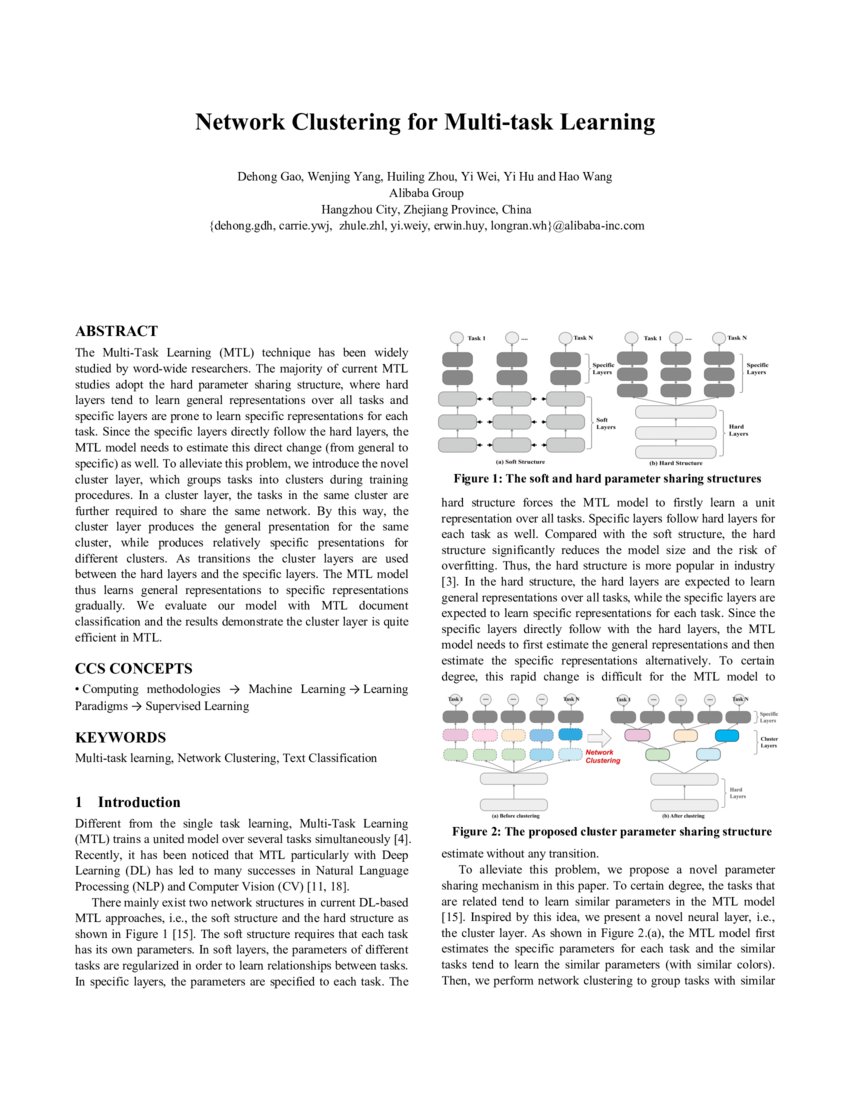

Network Clustering For Multi Task Learning Deepai

Home Rogerio Feris

Machine Learning: Transfer & Adaptation & Multitask Learning

Feb 28, 17 · 6 Dec: Why Cities Lose: The Deep Roots of the Urban-Rural Political Divide

Dec 13, 19 · Clustered multi-task learning: A convex formulation. In NIPS, 09. • 23 Zhuoliang Kang, Kristen Grauman, and Fei Sha. Learning with whom to share in multi-task feature learning. In ICML, 11. • 31 Shikun Liu, Edward Johns, and Andrew J. Davison. End-to-end multi-task learning with attention. In CVPR, 19.

Kate Saenko Proud Of My Wonderful Students 5 Neurips Papers Come Check Them Out Today Tomorrow At T Co W5dzodqbtx Details Below Buair2 Bostonuresearch

A List Of Multi Task Learning Papers And Projects Pythonrepo

Deep learning MIT provides a comprehensive pathway for students to see progress after the end of each module. With a team of dedicated, high-quality lecturers, deep learning MIT is not only a place to share knowledge but also helps students get inspired to explore and discover creative ideas of their own.

Ximeng Sun, PhD student, Boston University. Research areas: Computer Vision, Deep Learning.

Deep multi-task learning with low level tasks supervised at lower layers. Anders Søgaard (University of Copenhagen) and Yoav Goldberg (Bar-Ilan University). Abstract: In all previous work on deep multi-task learning we are aware of, all task supervisions are on the same (outermost) layer …

Pdf Adashare Learning What To Share For Efficient Deep Multi Task Learning Semantic Scholar

Large Scale Neural Architecture Search with Polyharmonic Splines. AAAI Workshop on Meta-Learning for Computer Vision, 21.

X. Sun, R. Panda, R. Feris, and K. Saenko. AdaShare: Learning What to Share for Efficient Deep Multi-Task Learning. Conference on Neural Information Processing Systems (NeurIPS).

Multi-Task Deep Reinforcement Learning for Continuous Action Control (Machine Learning, Reinforcement Learning)

9 Dec: Sociology Seminar Series

Adashare Learning What To Share For Efficient Deep Multi Task Learning Papers With Code

Aug , · A multi-task learning (MTL) method with adaptively weighted losses applied to a convolutional neural network (CNN) is proposed to estimate the range and depth of an acoustic source in deep ocean.

Among different tasks, we propose an efficient deep learning architecture called Task Adaptive Activation Network (TAAN) to enable flexible and low-cost multi-task learning. In TAAN, all the tasks share the weight and bias parameters of the neural network, thus its complexity is similar to that of a single-task model.
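The first snippet above mentions adaptively weighted losses without saying which weighting scheme is used. As a stand-in, the sketch below shows one widely used option, a simplified form of uncertainty-based weighting, in which each task has a learnable log-variance `s` and contributes `exp(-s) * loss + s` to the total. This is a swapped-in technique chosen for illustration, not necessarily the one used in that paper, and the function name is hypothetical.

```python
import math
from typing import Sequence


def weighted_total_loss(task_losses: Sequence[float],
                        log_vars: Sequence[float]) -> float:
    """Combine per-task losses with learnable log-variance weights.

    Each task contributes exp(-s) * loss + s. A larger s down-weights the
    task's loss, while the additive s term penalises making s arbitrarily large.
    """
    return sum(math.exp(-s) * loss + s for loss, s in zip(task_losses, log_vars))


# Hypothetical losses for two tasks, e.g. range and depth estimation.
losses = [1.0, 10.0]

# With all log-variances at zero, this reduces to the plain sum of losses.
plain = weighted_total_loss(losses, [0.0, 0.0])

# Raising s for the noisier second task shrinks its influence on the total.
reweighted = weighted_total_loss(losses, [0.0, 1.0])
```

In training, the `log_vars` would be optimised together with the network weights, so the balance between tasks adapts instead of being tuned by hand.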

AdaShare: Learning What To Share For Efficient Deep Multi-Task Learning

Learning to Retrieve Reasoning Paths over Wikipedia Graph for Question Answering (ICLR under …

Deep Multi-Task Learning with Shared Memory. Multi-task learning is an approach to learn multi … of these multi-task architectures is they share some lower layers to determine common features. After the shared layers, the remaining layers are split into multiple specific tasks.
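The description above (shared lower layers followed by a split into task-specific layers) is the usual hard-parameter-sharing layout. A minimal sketch follows, with toy scalar functions standing in for layers. All names (`make_hard_sharing_model`, the task names) are hypothetical and chosen only for illustration.

```python
from typing import Callable, Dict, Sequence

Layer = Callable[[float], float]


def make_hard_sharing_model(shared_layers: Sequence[Layer],
                            task_heads: Dict[str, Layer]) -> Callable[[float], Dict[str, float]]:
    """Build a model with one shared trunk and one head per task."""

    def forward(x: float) -> Dict[str, float]:
        # Shared lower layers: computed once, reused by every task.
        for layer in shared_layers:
            x = layer(x)
        # Task-specific layers: each head branches off the same features.
        return {name: head(x) for name, head in task_heads.items()}

    return forward


# Toy stand-ins for layers (assumption: scalar functions for brevity).
model = make_hard_sharing_model(
    shared_layers=[lambda x: x * 2, lambda x: x + 1],
    task_heads={"segmentation": lambda h: h * 10, "depth": lambda h: -h},
)

predictions = model(3)
```

The branching point is fixed by hand here (after the second shared layer), which is exactly the ad hoc choice that adaptive approaches such as AdaShare aim to replace.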

Resskip Block Integrating Feature Maps From The Upscale And Downscale Download Scientific Diagram

Guo et al., CVPR 19. Data Efficiency: Transfer Learning. Fine-tuning is arguably the most widely used approach for transfer learning. Existing methods are ad hoc in terms of determining where to fine-tune in a deep neural network (e.g., fine-tuning the last k layers). We propose SpotTune, a method that automatically decides, per training example, which layers of a pretrained model …

Nov 12, · AdaShare: Learning What To Share For Efficient Deep Multi-Task Learning. Ximeng Sun, Rameswar Panda, Rogerio Feris, Kate Saenko.

49. Residual Distillation: Towards Portable Deep Neural Networks without Shortcuts. Guilin Li, Junlei Zhang, Yunhe Wang, Chuanjian Liu, Matthias Tan, Yunfeng Lin, Wei Zhang, Jiashi Feng, Tong Zhang.

Kdst Adashare Learning What To Share For Efficient Deep Multi Task Learning Nips Paper Review

Kate Saenko On Slideslive

Adashare Learning What To Share For Efficient Deep Multi Task Learning Issue 1517 Arxivtimes Arxivtimes Github

Branched Multi Task Networks Deciding What Layers To Share Deepai

Neurips Papers

Github Sunxm2357 Adashare Adashare Learning What To Share For Efficient Deep Multi Task Learning

Shapeshifter Networks Cross Layer Parameter Sharing For Scalable And Effective Deep Learning Arxiv Vanity

Adashare Learning What To Share For Efficient Deep Multi Task Learning Request Pdf

Rogerio Feris On Slideslive

Pdf Exploring Shared Structures And Hierarchies For Multiple Nlp Tasks Semantic Scholar

Computer Vision Archives Mit Ibm Watson Ai Lab

Multi Task Learning And Beyond: Past, Present And Future (Zhihu)
